AI in Regulated Industries: Lessons from Building a Legal AI - Malakah



01 Sep, 2025

3 min read

Artificial Intelligence has already transformed consumer technology, but its impact in regulated industries, such as law, healthcare, and finance, is far more complex. These domains require not only intelligence but also accountability, compliance, and explainability.

Over the past year, we’ve been building Malakah, an AI hub specialized in Arabic legal systems. The journey has underscored a central truth: building AI for regulated industries is less about raw intelligence and more about trust, governance, and human oversight. In this article, I’ll share lessons from our experience and connect them with the broader challenges enterprises face when adopting AI in high-stakes contexts.

1. The Regulatory Backdrop

The urgency around AI regulation has accelerated worldwide:

- The EU AI Act (in force since August 1, 2024) sets risk-based categories for AI. “High-risk” applications, including certain AI systems used in the administration of justice, face strict compliance obligations that phase in over the following years.

- The Council of Europe’s Framework Convention on Artificial Intelligence (adopted May 2024, opened for signature September 2024) emphasizes transparency, human rights, and accountability.

- In the UK, Garfield.Law became the first AI-driven law firm authorized by the Solicitors Regulation Authority, demonstrating that regulation and AI adoption can coexist effectively. (Garfield AI)

- The Saudi Data & AI Authority (SDAIA) has issued non-binding AI Ethics Principles, signaling expectations around fairness, transparency, and auditability. Enforcement mechanisms are under development. (Arab News)

- The Saudi Ministry of Justice launched Najiz, an e-service platform that helps the public handle legal matters online. (Najiz)

These frameworks aren’t abstract; they shape how legal AI must be designed, audited, and monitored.

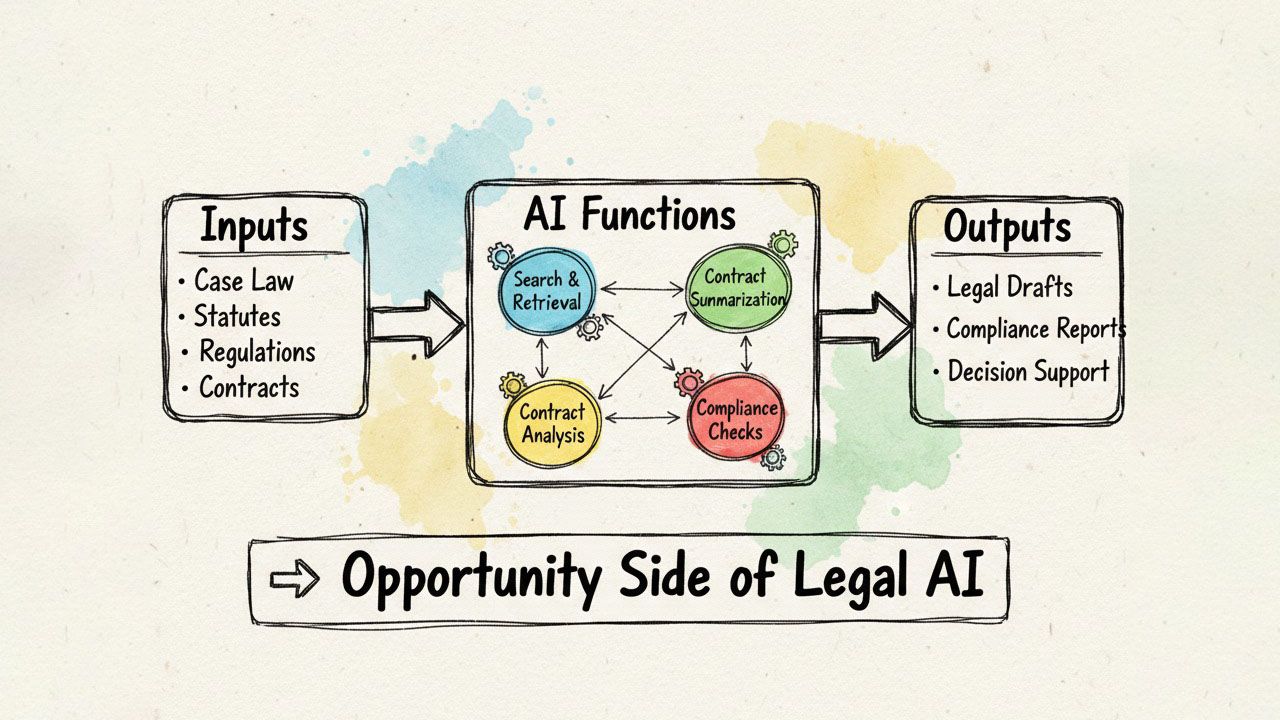

2. The Opportunities in Legal AI

Despite these challenges, the potential is substantial:

- Research & Analysis: Automating case law review, precedent search, and statute interpretation.

- Contracts & Compliance: Drafting, reviewing, and ensuring regulatory alignment at scale.

- Access to Justice: AI-powered assistants lowering barriers for individuals navigating complex legal systems.

Early adoption has already shown that legal AI can save lawyers many hours of research and review while improving consistency and access to legal help.

3. The Risks: Why Regulation Matters

Legal AI carries risks that are distinctive to the field:

- Hallucinations: AI models sometimes fabricate citations or cases, a critical risk in legal proceedings. A UK barrister recently faced sanctions for citing fictitious case law generated by AI. (AP News)

- Bias & Fairness: Legal systems are already shaped by historic inequalities. AI trained on unbalanced data can amplify them.

- Lawyer Resistance: Surveys show that 83% of lawyers consider using AI to provide legal advice an inappropriate use of the technology. (Reuters)

- Auditability: Enterprises adopting AI demand not only smart answers but also traceable reasoning and evidence.

These risks underline why compliance-by-design is fundamental, not optional.
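One practical guard against fabricated citations is to check every case an answer cites against a trusted index before the answer reaches a user. The sketch below is our illustration, not Malakah’s code: the citation format (`Case No. 123/2024`), the regex, and the `check_citations` helper are hypothetical, and real legal citation formats need jurisdiction-specific parsers.

```python
import re
from dataclasses import dataclass

# Hypothetical citation format "Case No. <number>/<year>"; real formats vary widely.
CITATION_RE = re.compile(r"Case No\. (\d+/\d{4})")


@dataclass
class CitationCheck:
    verified: list[str]
    unverified: list[str]

    @property
    def ok(self) -> bool:
        # An answer passes only if every citation it makes exists in the trusted index.
        return not self.unverified


def check_citations(answer: str, trusted_index: set[str]) -> CitationCheck:
    """Split the citations found in `answer` into known and unknown ones."""
    cited = CITATION_RE.findall(answer)
    verified = [c for c in cited if c in trusted_index]
    unverified = [c for c in cited if c not in trusted_index]
    return CitationCheck(verified, unverified)


if __name__ == "__main__":
    index = {"123/2024", "45/2023"}
    result = check_citations("See Case No. 123/2024 and Case No. 999/2022.", index)
    print(result.ok, result.unverified)  # False ['999/2022']
```

An answer that fails the check can be blocked or routed to a human reviewer rather than silently shown.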

4. Lessons from Building Malakah

When developing Malakah, we encountered these challenges first-hand. A few lessons stand out:

1. Compliance-by-Design

From day one, we aligned our workflows with the EU AI Act’s principles: human oversight, transparency, and risk assessment. This ensured Malakah was built to be compliant, not merely functional.
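Transparency and oversight become concrete when every significant step the system takes is recorded in a tamper-evident trail. The sketch below assumes a hash-chained, append-only log; the `AuditLog` class and its event fields are hypothetical illustrations, not Malakah’s implementation. Each entry stores the hash of the previous one, so editing any past entry breaks the chain.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Hash a canonical JSON encoding (sorted keys) so verification is reproducible.
    payload = json.dumps(body, sort_keys=True, ensure_ascii=False).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Append-only log in which each entry is chained to the hash of its predecessor."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: str, details: dict) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "details": details,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        record = {**body, "hash": _digest(body)}
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry makes this False."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("query_received", {"query_id": "q-1"})
    log.append("answer_reviewed", {"query_id": "q-1", "reviewer": "lawyer-7"})
    print(log.verify())  # True
```

In production the log would live in write-once storage; the chain only makes tampering detectable, not impossible.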

2. Data Governance

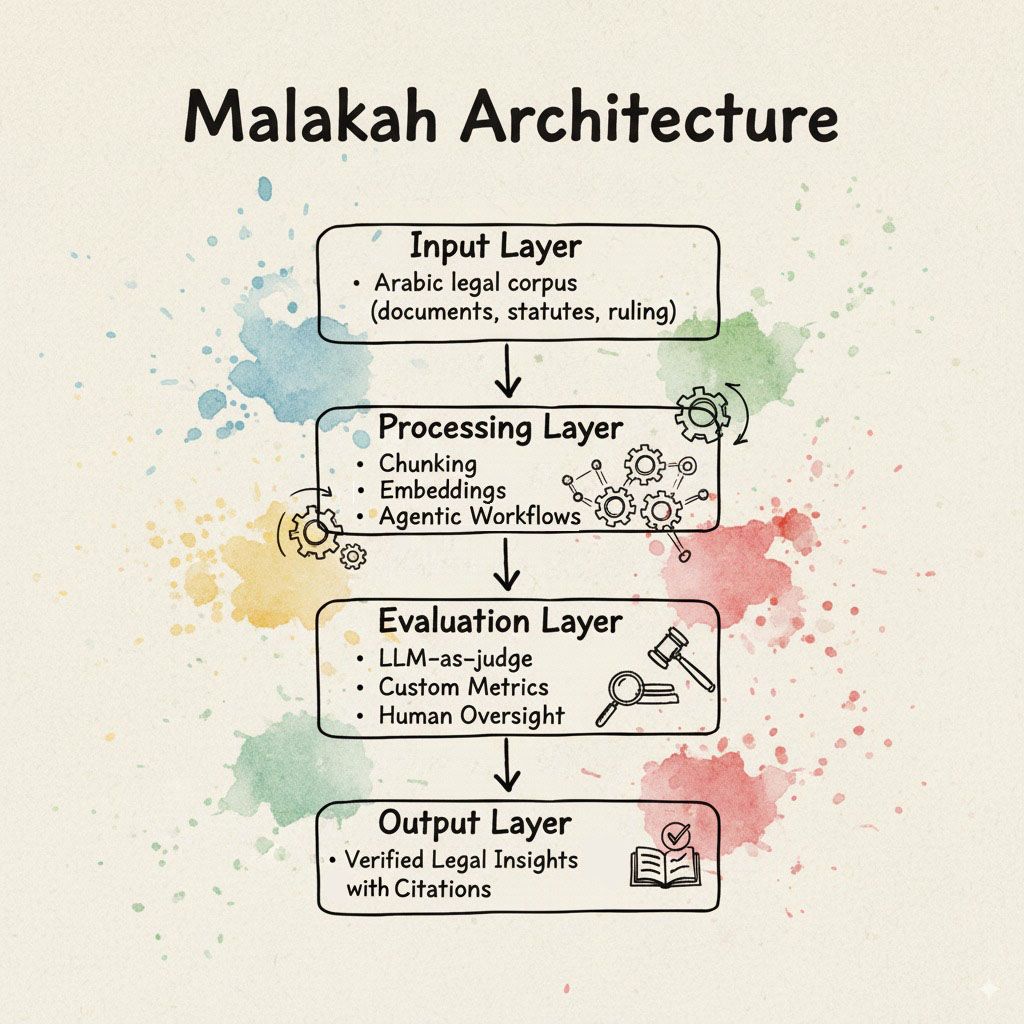

The Arabic legal corpus posed unique challenges: fragmented sources, inconsistent formatting, and linguistic nuance. We had to design robust pipelines for corpus structuring, document chunking, embedding selection, and domain-tuned indexing, ensuring retrieval was precise and legally faithful.
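For statutes, one chunking choice that keeps retrieval legally faithful is to split at article boundaries first and only then cut over-long articles into overlapping windows. The sketch below assumes each provision opens with “المادة” (“Article”) at the start of a line; the pattern, the 800-character window, the 100-character overlap, and the function names are all hypothetical, and real corpora need more heading variants.

```python
import re

# Hypothetical heading rule: a line starting with "المادة" ("Article") opens a provision.
# Real corpora also use ordinal words, Eastern Arabic numerals, and OCR-damaged headings.
ARTICLE_START = re.compile(r"(?m)^(?=المادة\s)")


def split_articles(text: str) -> list[str]:
    """Split a statute at every line that begins with 'المادة'."""
    return [part.strip() for part in ARTICLE_START.split(text) if part.strip()]


def window(segment: str, max_chars: int = 800, overlap: int = 100) -> list[str]:
    """Cut an over-long article into overlapping character windows."""
    if overlap >= max_chars:
        raise ValueError("overlap must be smaller than max_chars")
    if len(segment) <= max_chars:
        return [segment]
    step = max_chars - overlap
    return [segment[i:i + max_chars] for i in range(0, len(segment) - overlap, step)]


def chunk_statute(text: str, max_chars: int = 800, overlap: int = 100) -> list[dict]:
    """Return chunks that never cross an article boundary, tagged with the article's position."""
    chunks = []
    for seq, article in enumerate(split_articles(text), start=1):
        for piece in window(article, max_chars, overlap):
            # The article position lets a retrieved chunk be traced back to its source provision.
            chunks.append({"article_seq": seq, "text": piece})
    return chunks
```

Splitting on structure before size means a retrieved chunk never mixes two provisions, which matters when the answer must cite a specific article.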

3. Human-in-the-Loop

We made a deliberate choice: Malakah does not replace legal experts. Instead, it supports lawyers with evidence-backed suggestions while leaving final decisions to humans. For legal professionals, Malakah aims to free up time for higher-value work. For the public, it offers an accessible entry point into a legal landscape that can otherwise seem daunting.
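A simple way to enforce that division of labor is a review gate in front of every suggestion. The sketch below is a hypothetical illustration: the `confidence` score, the 0.8 threshold, and the `ReviewGate` name are assumptions, not details of Malakah. Uncited or low-confidence output is held for an expert; everything else is still shown only as a suggestion with its evidence.

```python
from dataclasses import dataclass, field


@dataclass
class Suggestion:
    answer: str
    citations: list[str]
    confidence: float  # hypothetical pipeline score in [0, 1]


@dataclass
class ReviewGate:
    threshold: float = 0.8  # hypothetical cut-off, to be tuned with legal reviewers
    review_queue: list[Suggestion] = field(default_factory=list)

    def route(self, s: Suggestion) -> str:
        # Uncited or low-confidence output waits for a legal expert before anyone sees it.
        if not s.citations or s.confidence < self.threshold:
            self.review_queue.append(s)
            return "expert_review"
        # Otherwise it is presented as a suggestion with its evidence;
        # the lawyer, not the system, makes the final call.
        return "suggest_with_evidence"
```

The gate never returns a “final answer” route; by design, the strongest outcome is a suggestion.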

4. Agentic Workflows

Instead of a static retrieval model, we built agentic workflows: multi-step reasoning chains that dynamically select strategies (search, summarization, clause comparison, cross-document verification). This made outputs more reliable and context-aware.
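The shape of such a workflow can be sketched as a planner that picks a sequence of strategies and a runner that executes them while recording a trace. In this hypothetical sketch the planner is a keyword rule standing in for an LLM planner, and the tools are stubs; none of the names reflect Malakah’s actual components.

```python
from typing import Callable


# Hypothetical tool stubs standing in for real retrieval, summarization, and comparison.
def search(state: dict) -> dict:
    state["docs"] = [f"document about: {state['query']}"]
    return state


def summarize(state: dict) -> dict:
    state["summary"] = f"summary of {len(state['docs'])} document(s)"
    return state


def compare_clauses(state: dict) -> dict:
    state["comparison"] = "clause differences"
    return state


def verify(state: dict) -> dict:
    # Placeholder for cross-document verification: here it only checks that evidence was retrieved.
    state["verified"] = bool(state.get("docs"))
    return state


TOOLS: dict[str, Callable[[dict], dict]] = {
    "search": search,
    "summarize": summarize,
    "compare": compare_clauses,
    "verify": verify,
}


def plan(query: str) -> list[str]:
    """Choose a strategy sequence (a keyword rule here; an agent might ask an LLM to plan)."""
    steps = ["search", "compare" if "compare" in query.lower() else "summarize"]
    steps.append("verify")  # every chain ends with a verification step
    return steps


def run(query: str) -> dict:
    state = {"query": query, "trace": []}
    for step in plan(query):
        state = TOOLS[step](state)
        state["trace"].append(step)  # the trace doubles as an explanation of how the answer was built
    return state
```

Keeping the step trace in the state is what makes a multi-step chain auditable after the fact.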

5. Evaluation Pipeline (our hardest but most valuable investment)

Evaluation became our differentiator. We quickly realized that accuracy in regulated AI isn’t just about BLEU scores or token perplexity. We needed multi-layered evaluation:

- LLM-as-a-Judge: Large models compare outputs against references, scoring for faithfulness, coherence, and legal soundness.

- Custom Metrics: Precision/recall on statute citations, factual consistency checks, and embedding drift monitoring.

- Human Oversight: Legal professionals manually score edge cases, with feedback flowing back into model refinement.
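The statute-citation metric can be sketched as set-based precision and recall against a gold reference. This is a minimal illustration assuming citations are plain strings; the normalization (case-folding and whitespace collapsing) is a placeholder for jurisdiction-specific canonicalization, and returning 0.0 when there is nothing to divide by is one convention among several.

```python
def normalize(citation: str) -> str:
    # Placeholder canonicalization: case-fold and collapse whitespace.
    return " ".join(citation.casefold().split())


def citation_precision_recall(predicted: list[str], reference: list[str]) -> tuple[float, float]:
    """Precision: share of cited statutes that are correct. Recall: share of required statutes cited."""
    pred = {normalize(c) for c in predicted}
    gold = {normalize(c) for c in reference}
    hits = len(pred & gold)
    precision = hits / len(pred) if pred else 0.0
    recall = hits / len(gold) if gold else 0.0
    return precision, recall


if __name__ == "__main__":
    p, r = citation_precision_recall(
        ["Article 5 Labor Law", "Article 77 Labor Law", "Article 3 Civil Code"],
        ["article 5  labor law", "Article 80 Labor Law"],
    )
    print(round(p, 3), r)  # 0.333 0.5
```

Low precision flags over-citation or invented statutes; low recall flags answers that miss a provision a lawyer would expect.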

This evaluation pipeline is still evolving, but it’s our strongest defense against hallucinations and untrustworthy outputs.
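Embedding drift monitoring can start as simply as comparing the mean embedding of a baseline window with that of a recent window. The sketch below uses cosine distance between the two centroids; the 0.1 threshold and the function names are hypothetical, and a centroid comparison is a coarse signal that would normally be complemented by distribution-level tests.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(component) / n for component in zip(*vectors)]


def cosine_distance(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norms == 0:
        raise ValueError("cosine distance is undefined for zero vectors")
    return 1.0 - dot / norms


def drift_alert(
    baseline: list[list[float]], recent: list[list[float]], threshold: float = 0.1
) -> tuple[float, bool]:
    """Return (distance, alert): alert is True when the centroids have drifted past the threshold."""
    distance = cosine_distance(centroid(baseline), centroid(recent))
    return distance, distance > threshold
```

An alert here would prompt a closer look, for example re-running the evaluation set, rather than trigger any automatic change.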

5. The Broader Blueprint for Regulated AI

Our journey with Malakah reflects a blueprint for any enterprise exploring AI in regulated sectors:

- Start with regulation in mind. Compliance is not a patch; it’s the foundation.

- Build AI literacy among staff. Regulation increasingly requires it: the EU AI Act, for example, obliges providers and deployers of AI systems to take measures to ensure a sufficient level of AI literacy among their staff.

- Prioritize explainability. An AI system that can’t explain itself won’t survive in courtrooms, hospitals, or financial audits.

- Embrace global standards like the Council of Europe’s AI Convention to future-proof systems.

Conclusion

AI in regulated industries is not a race for the smartest model. It’s a race to build the most trustworthy, auditable, and human-aligned systems.

With Malakah, we’ve learned that the future of AI in law won’t be decided by raw capability, but by how well we integrate compliance, oversight, and resilience into the DNA of our systems.

In regulated industries, trust is the true competitive advantage.
